Nathan recently whipped up this promotional image using a series of in-game screenshots layered together in Photoshop. Sarge sure looks annoyed at that shark missile!

Space Dust Studios

Developer Blog

Progress is continuing at a rapid rate, with the gameplay, environment and VFX improving every day. Also, if you missed our last post showing early gameplay footage, be sure to check it out, but bear in mind the game looks a lot better now!

GAMEPLAY UPDATE

We took our Space Dust Racing milestone build to the IGDA Melbourne pitching night and got some great feedback. About 30 people wandered over and joined in the 4-player mayhem. They offered suggestions on what we could improve and told us which elements they liked most.

Playing Space Dust Racing at the IGDA Melbourne meet, complete with a scrappy piece of paper showing the controls affixed to the side of the laptop

New features we've added include:

- the ability to ram other vehicles off the track
- auto-aiming laser sights for the turret gun
- slow but sneaky homing on the smart missile
- a passive shock shield that protects you from attacks and doubles as a melee weapon
- a more forgiving revision of the vehicle physics, so that players stay in the game for longer

Thanks to the splines in Unreal Engine 4.3, we've also added spline data for the race track. This has finally let us create silky smooth camera behaviour and easily give the AI racers some rudimentary knowledge of the track.
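Unreal's spline component has its own API, and we won't reproduce it here. The engine-agnostic sketch below shows why spline data makes "knowledge of the track" cheap. It assumes a closed-loop Catmull-Rom curve through hypothetical 2D control points. `TrackSpline`, `Evaluate` and `ClosestParam` are illustrative names, not UE4 functions. Projecting a car's position onto the curve gives a progress value that a camera rig or an AI racer could follow.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <utility>
#include <vector>

struct Vec2 { float x, y; };

Vec2 operator+(Vec2 a, Vec2 b) { return {a.x + b.x, a.y + b.y}; }
Vec2 operator-(Vec2 a, Vec2 b) { return {a.x - b.x, a.y - b.y}; }
Vec2 operator*(float s, Vec2 v) { return {s * v.x, s * v.y}; }
float Length(Vec2 v) { return std::sqrt(v.x * v.x + v.y * v.y); }

// Hypothetical track representation: a closed uniform Catmull-Rom spline
// passing through every control point (not UE4's USplineComponent).
class TrackSpline {
public:
    explicit TrackSpline(std::vector<Vec2> points) : pts_(std::move(points)) {
        assert(pts_.size() >= 4 && "need at least four control points");
    }

    // t in [0, N): the integer part picks the segment, the fraction is the
    // position within it. Evaluate(k) lands on control point k.
    Vec2 Evaluate(float t) const {
        assert(t >= 0.0f);
        const std::size_t n = pts_.size();
        const float seg = std::floor(t);
        const float u = t - seg;
        const std::size_t i = static_cast<std::size_t>(seg) % n;
        const Vec2 p0 = pts_[(i + n - 1) % n], p1 = pts_[i];
        const Vec2 p2 = pts_[(i + 1) % n], p3 = pts_[(i + 2) % n];
        // Standard Catmull-Rom basis written as a cubic in u.
        const Vec2 a = 2.0f * p1;
        const Vec2 b = p2 - p0;
        const Vec2 c = 2.0f * p0 - 5.0f * p1 + 4.0f * p2 - p3;
        const Vec2 d = 3.0f * p1 - p0 - 3.0f * p2 + p3;
        return 0.5f * (a + u * b + (u * u) * c + (u * u * u) * d);
    }

    // Brute-force projection: the spline parameter whose sample lies closest
    // to pos. Dividing by PointCount() gives a 0..1 lap fraction.
    float ClosestParam(Vec2 pos, int samplesPerSegment = 32) const {
        const int total = static_cast<int>(pts_.size()) * samplesPerSegment;
        float bestT = 0.0f;
        float bestDist = std::numeric_limits<float>::max();
        for (int s = 0; s < total; ++s) {
            const float t = static_cast<float>(s) / samplesPerSegment;
            const float dist = Length(Evaluate(t) - pos);
            if (dist < bestDist) { bestDist = dist; bestT = t; }
        }
        return bestT;
    }

    std::size_t PointCount() const { return pts_.size(); }

private:
    std::vector<Vec2> pts_;
};
```

Sorting racers by laps completed plus `ClosestParam(pos) / PointCount()` would give race positions. A real implementation would search only around the previous frame's parameter rather than sampling the whole track every frame.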

CLAWTOPIA

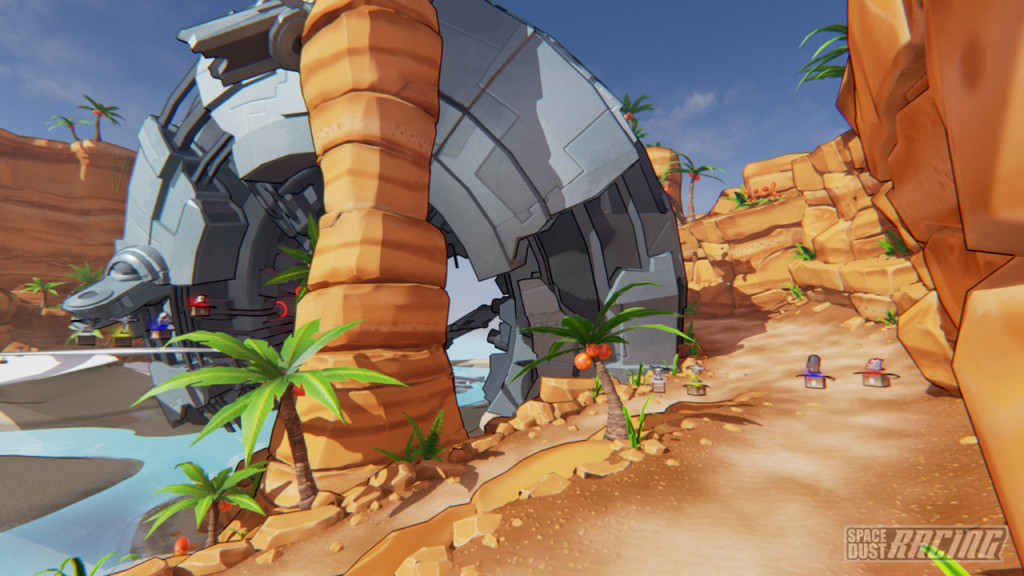

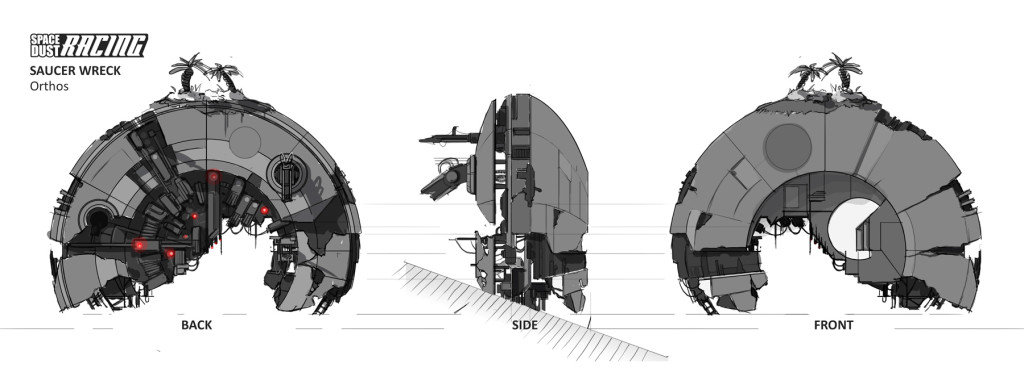

Clawtopia is feeling more and more like the alien tropical island we imagined in the early concept art. Pops has been heading up the terrain sculpting and the creation and placement of plants, and the island is really coming to life. As final gameplay adjustments are made to the track and obstacles, the remaining white-box assets will be replaced with final art and textured meshes.

The vision for Clawtopia was that it should feel familiar but also alien, and that vibe is really starting to materialize. Obviously the enormous crashed flying saucer helps a bit!

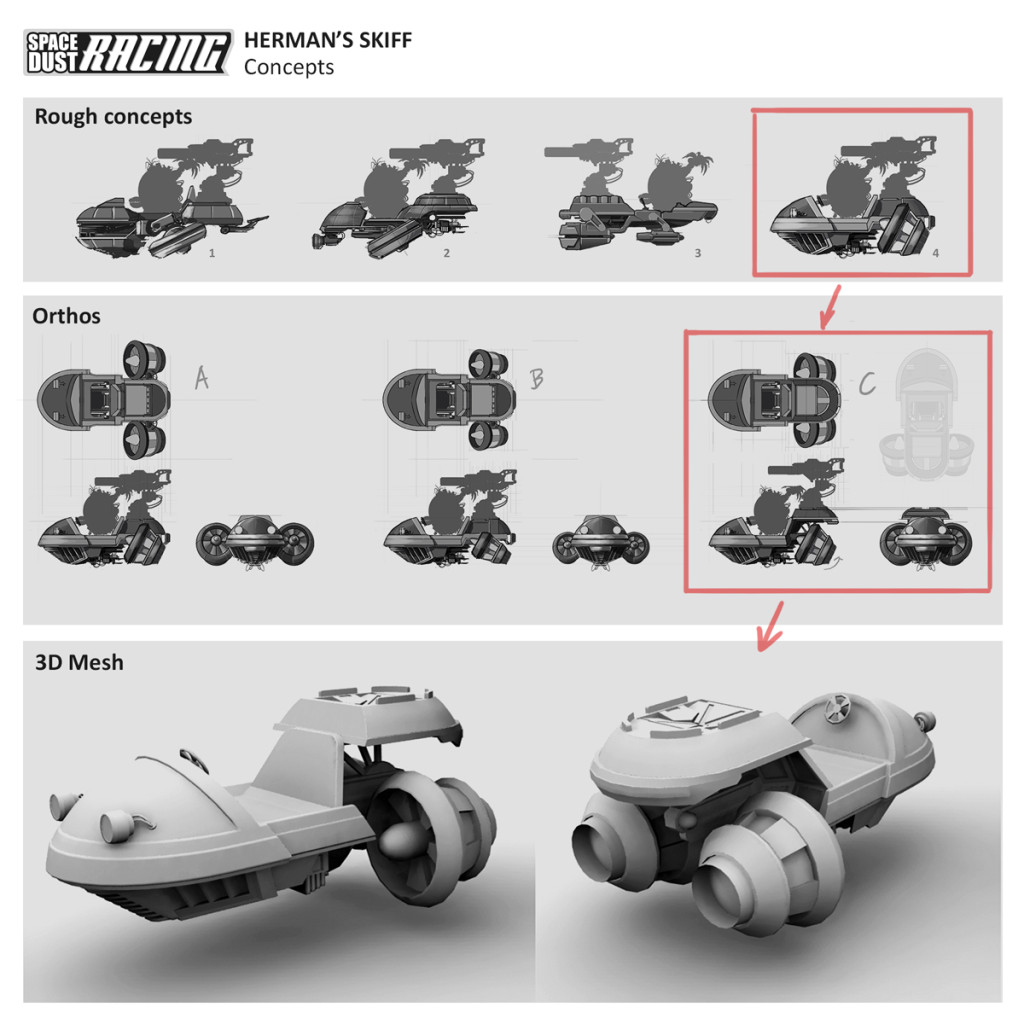

HERMAN’S SKIFF

Clawtopia is Herman's home world, so unsurprisingly Herman will be the next Space Dust Racing character to be built. First, though, Grigs is building out the geometry for his vehicle. In keeping with Herman's character, it is a fish-inspired, hovering, jet-powered skiff (phew!). We worked through several rounds of concepts, then refined the final design to match the proportions of Sarge's tank. You can see the early progress below.

Well… that’s it from us for this development update. Is there any part of the game you’d like us to talk about? Leave your thoughts below in the comments.

7 players, 5 locations & 2 months dev…

It has been crazy busy at Space Dust Central! We hit our first major milestone at the beginning of the month and have been working hard toward our complete vertical slice. In keeping with our plan of open, ongoing development of Space Dust Racing, here's some early gameplay footage.

This play-test footage from the 28th of June was recorded directly from the game, with 7 players across 5 different locations (online multiplayer). Glen also recorded our Google Hangout audio stream, which I overlaid on the footage. It is still early days (just 2 months of dedicated development!), but we're really happy with how fun it already is to play. It fits our end goal of couch racing chaos perfectly!

We plan to have a larger general update shortly, though I’ve included a recent screenshot above as we’ve moved on since the footage was taken. As always, please post comments and we look forward to showing you more soon!

Unity vs Unreal: Choosing an engine for Space Dust Racing – Part 3: Source, Community and the Future

In case you missed them, you can check out the first two parts of Unity vs Unreal: Choosing an engine for Space Dust Racing at Part 1: Adventures in Unity and Part 2: The Unreal Deal.

Source release

Before I go any further, I have to talk about the release of the source code. In combination with all of the features Unreal Engine 4 offers, I believe having access to the source in such an accessible way is one of the most important, advantageous and exciting breakthroughs – it pretty much benefits everyone involved, even if you don’t use it directly.

Epic’s code gets reviewed and analysed for bugs and functionality. Desired core features are discussed and/or implemented by contributors. It becomes an even more exciting engine from an academic and teaching point of view, especially for game programming courses.

As for us, we massively reduce our dependency risk. We know we can streamline our workflow and fix time sinks. We can also debug and fix any issue much more efficiently, whether it is on the game, engine or editor side.

It also means we are in a position that we can implement any feature we require, which removes the “the engine can’t do this, so we can’t do that” factor.

And then there is the development community…

Development community

Even though Unity offers a source code license, the community in general does not have access, and those who do are bound by Non-Disclosure Agreements (NDAs). As long as this is the case, I think they are missing out on the advantages of a more open source release.

Epic has stated in their legal FAQ that the EULA does not include an NDA and that everyone is free to discuss Unreal Engine. This in itself is huge! For us it is great, because it means we can talk and blog about the engine and its source code.

“You are permitted to post snippets of Engine Code, up to 30 lines of code in length, online in public forums for the limited purpose of discussing the content of the snippet and not for any other purpose.”

– From the Unreal Engine EULA

The source release means that the development community as a whole is empowered to discuss, find and fix issues amongst themselves and therefore find solutions to almost any problem. Not only that but they can create free or paid middleware with a comprehensive insight into exactly how the engine works and interacts with their software. There is huge potential here.

We expect to see plugins, or even modifications to the engine source, go up on the Marketplace or at least on GitHub somewhere. Hopefully we will also see the latest advanced techniques from SIGGRAPH and the like, as well as community improvements to key systems (networking, physics, audio, etc.).

The Blueprint system makes it even easier for non-programmers to get involved and create more advanced content. The experimental Blutility goes further and lets you add functionality to the editor itself.

Modding

The barrier to entry for the editor is low ($19, assuming someone doesn't already have a copy), so it might be feasible to use the editor to mod or build content for released games. A game could provide an editor plugin that lets other licensees build new content for that particular game using Unreal Engine. Once released, the modded content should be usable by any end user. If the game's EULA allows it, the content could even be sold, paying the usual 5% royalty to Epic. I would guess that Epic will support the idea, since it lets them sell more licences and broaden their development community.

From the Blackjack example. Halfway there, just missing one thing…

Future proofing

In my opinion many key UE4 systems are already ahead of the competition, yet Epic is still actively improving the engine and adding new and exciting features. The source is available on GitHub, and Epic integrates their internal Perforce changes almost directly into the developer GitHub repository. As a result, we all get a sneak peek at what is coming for the engine. Thankfully, they also like to talk about most of these features on their Twitch stream, YouTube channels and forums. This helps us keep track, so we can schedule work on our end to coincide with progress and releases on their side.

When UE4 was first released, Linux client support wasn't there yet, but you could see it was not far off (it arrived in 4.1, along with SteamOS support). Linux editor support was another matter. Soon enough, though, the community jumped on board and started getting the editor running on Linux.

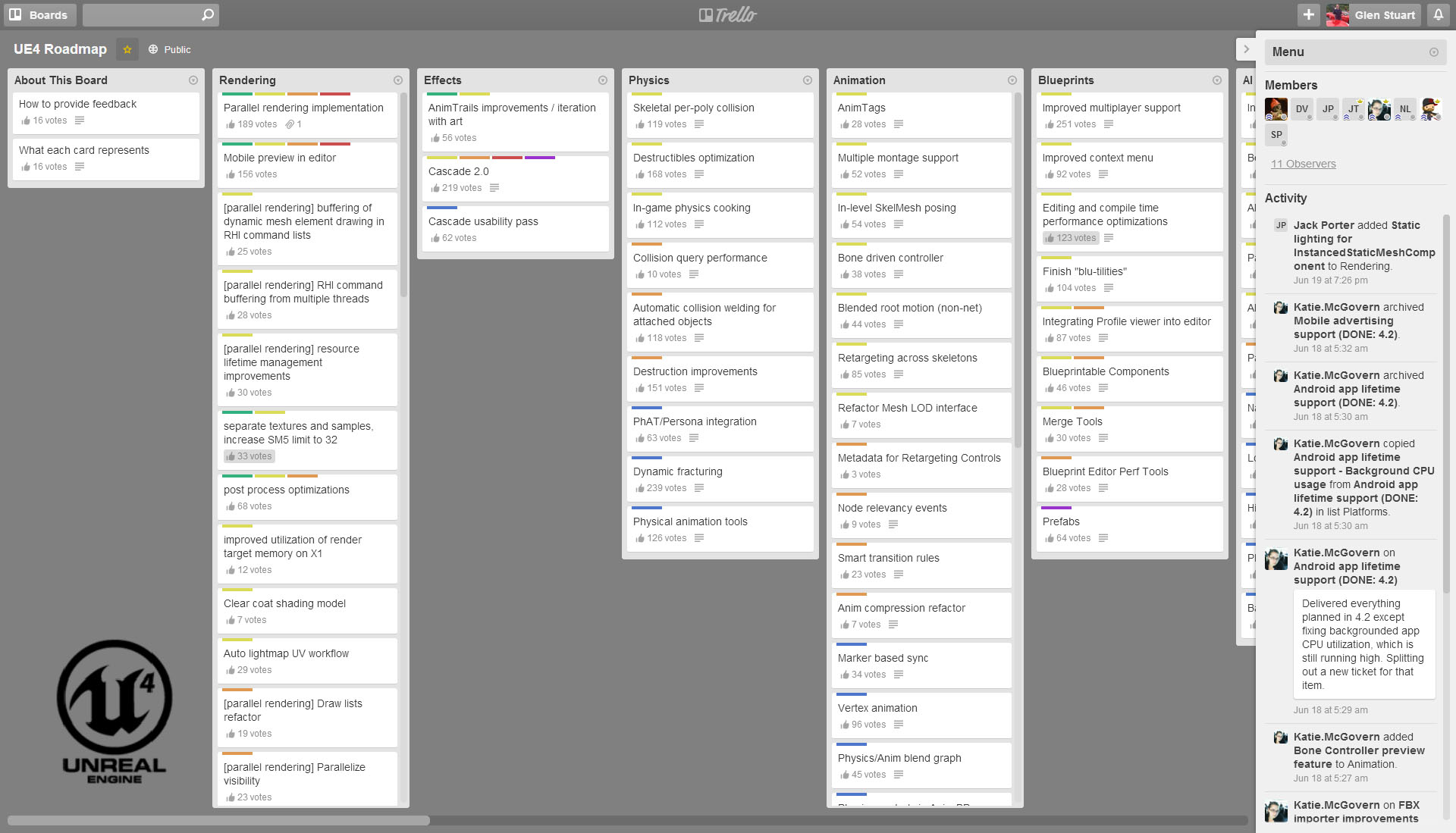

Even better still, Epic has now released a roadmap in the form of a Trello board for the engine development and direction in general. You can go there and vote on the items most pressing to you and your team.

Super exciting screenshot of the Trello board.

Todo

One concern I have with Unreal Engine is how often we seem to need to modify engine code in situations where we wouldn't expect to. This is tolerable for changes kept within the team. It is far less ideal when you want to distribute those changes. I imagine this will become more of an issue when the Marketplace really comes to life.

For example, if you need to include a third-party library in your project or in a plugin, you currently have to manage the DLLs and copy them around manually after packaging builds of your game. The engine avoids this for its own dependencies (Ogg, PhysX, etc.) by hard-coding its requirements into .Automation.cs files such as WinPlatform.Automation.cs. That file contains a comment I totally agree with:

//todo this should all be partially based on UBT manifests and not hard coded

Similarly, if you have additional non-asset files such as help or HTML project files, you need to manually copy them during the deployment stages.
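Until packaging handles this, one stopgap is a small post-packaging tool that copies the extra files for you. The sketch below is exactly that kind of stopgap, under our own assumptions. It reads a hypothetical plain-text manifest (one file name per line, in the spirit of the "based on UBT manifests" comment above). `CopyManifestFiles` and the folder layout are made up for illustration and are not part of Unreal's automation tooling. It needs C++17 for `std::filesystem`.

```cpp
#include <cassert>
#include <cstddef>
#include <filesystem>
#include <fstream>
#include <string>

namespace fs = std::filesystem;

// Hypothetical post-packaging step: read a plain-text manifest (one file name
// per line) and copy each listed file from a third-party folder into the
// packaged build's binaries folder. Returns how many files were copied.
std::size_t CopyManifestFiles(const fs::path& manifest,
                              const fs::path& sourceDir,
                              const fs::path& packagedBinDir) {
    std::ifstream in(manifest);
    assert(in.is_open() && "manifest could not be opened");
    fs::create_directories(packagedBinDir);
    std::size_t copied = 0;
    std::string name;
    while (std::getline(in, name)) {
        if (!name.empty() && name.back() == '\r') name.pop_back();  // CRLF manifests
        if (name.empty()) continue;                                  // skip blank lines
        const fs::path src = sourceDir / name;
        if (!fs::exists(src)) continue;  // a real tool should report missing files
        fs::copy_file(src, packagedBinDir / name,
                      fs::copy_options::overwrite_existing);
        ++copied;
    }
    return copied;
}
```

Running something like this after each package build keeps the copy step out of engine code, at the cost of one more tool to maintain.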

I hope some of these issues are addressed before the Marketplace really kicks off, as integrating hundreds of engine hacks or changes during updates will get quite messy.

The good news is we can solve these issues!

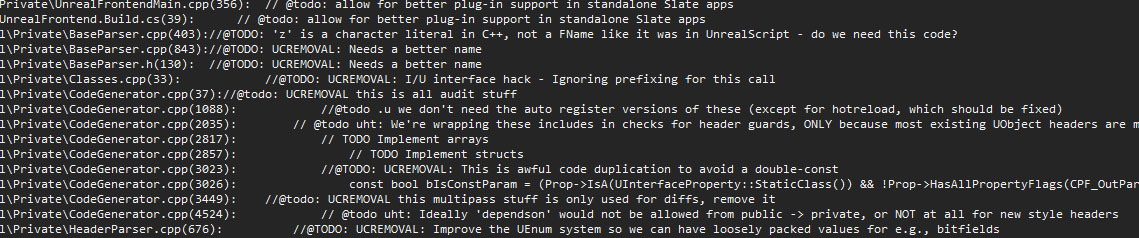

Miscellaneous todo comments from the source code.

Knowledge base

Another plus for us using Unreal Engine that I should mention is the number of experienced developers out there. There are plenty of engine systems which haven’t changed a great deal and have been used by many people over the years in UDK/UE3 and prior iterations – systems such as the networking/replication, basic material nodes, Cascade, Matinee, etc. This all ends up meaning it will be easier to hire or consult with people that have knowledge and experience with Unreal technologies.

Here in Australia, I know the team at 2K Australia are using Unreal technology for Borderlands: The Pre-Sequel! and have done so for many of their other games over the years. A group of former staff broke off and started Uppercut Games, also using Unreal. I've seen a bunch of great tutorials from Pub Games for both UDK and now UE4. Recently I came across a few studios with roots in the AIE Incubator Program, such as Dancing Dinosaur Games, Daybreak Interactive and Wild Grass Games. Plenty more studios here are doing Unreal work, such as Canvas Interactive, Commotion Games, Epiphany Games, Reach Game Studios, Mere Mortal Games and Organic Humans, and I'm sure there are a ton I have missed.

In addition to all of those, there are also non-gaming groups using Unreal Engine 4, such as Opaque Multimedia here in Melbourne, who are working on sensory therapy for dementia patients.

That royalty

Assuming a game does well, Epic's 5% cut means that in most cases Unity with a bunch of plugins would still generally be the cheaper option in the long term. But really, under that same assumption, you will be laughing either way.
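Here is a simplified break-even calculation that makes that trade-off concrete. It uses the terms quoted in this series: $19 per seat per month plus 5% of gross revenue. The Unity-side figure is a placeholder you supply, not a real price. The model also ignores any thresholds, exemptions or custom terms the actual EULA may define.

```cpp
#include <cassert>

// Terms as described in this series: $19 per seat per month plus a 5%
// royalty on gross revenue. Simplified: no thresholds or custom deals.
constexpr double kUnrealMonthlyPerSeat = 19.0;
constexpr double kUnrealRoyaltyRate = 0.05;

double UnrealCost(double grossRevenue, int seats, int months) {
    assert(seats > 0 && months >= 0 && grossRevenue >= 0.0);
    return kUnrealMonthlyPerSeat * seats * months + kUnrealRoyaltyRate * grossRevenue;
}

// Gross revenue at which Unreal's total cost equals a fixed, up-front Unity
// spend (licences plus plugins; the figure passed in is your own estimate).
// Above this revenue, the royalty makes Unreal the more expensive option.
double BreakEvenRevenue(double unityUpfrontCost, int seats, int months) {
    const double subscriptions = UnrealCost(0.0, seats, months);
    if (unityUpfrontCost <= subscriptions) {
        return 0.0;  // Unreal already costs at least as much at zero revenue
    }
    return (unityUpfrontCost - subscriptions) / kUnrealRoyaltyRate;
}
```

For example, take five seats for ten months ($950 in subscriptions) and a hypothetical $10,000 Unity budget. The break-even point is ($10,000 - $950) / 0.05 = $181,000 of gross revenue.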

When starting a project, it is worth doing the research and forecasting to work out where you expect your project to end up. From the start, Epic has advertised and welcomed custom licensing terms that reduce or remove the royalty, if that better suits your project.

“If you require terms that reduce or eliminate royalty for an upfront fee, or if you need custom legal terms or dedicated Epic support to help your team reduce risk or achieve specific goals, we’re here to help.”

– From the Unreal Engine FAQ

In the end

I recently read on Develop that Unreal Engine 4 took the #2 spot and Unity 5 the #3 spot in their Top Tech list. This goes to show that, in the end, they are both great engines, each offering its own advantages and trade-offs.

As for us, we found that Unreal Engine 4 aligned just that bit better with the direction we wanted to take Space Dust Racing, so we decided to give it a run for its money.

I hope you enjoyed this short series on Unity and Unreal Engine! Are you using Unity or Unreal Engine? If so, how are you finding the tech so far for your needs?

In case you missed it, you can check out the first part of Unity vs Unreal: Choosing an engine for Space Dust Racing at Part 1: Adventures in Unity.

Unreal GDC

Three of us made it over to GDC this year and after having spent the previous few days participating in the speed dating of game development (also known as Game Connection America), we were leisurely wandering around the GDC expo halls.

We had a chat to the guys at the Unity booth and showed them one of our playable prototypes, then went and said hello to a friend at the Qualcomm booth. Next we stared for a while at Allegorithmic's demonstration of their Substance workflow, followed by a quick laugh at Goat Simulator. Soon after, we spotted an odd-looking sign on the back of an unknown booth, which basically said:

Get Unreal. Full engine and source. $19/MO +5%

After a few exchanges of WTH, we walked around to the front. Sure enough, it was Epic Games' Unreal Engine 4 booth, with a ton of people gathered around and just as many staff members eager to answer the onslaught of questions. After a few more exchanges of WTH with the staff, we joined the queue and watched the presentation they had running in the back. Yep, it looked legit. Fast forward a couple of hours to the hotel room, by which point we had signed up and downloaded a copy to start checking out.

A collection of snapshots taken while in San Francisco attending GameConnection and GDC 2014.

Rendering

For us, when compared to Unity, probably one of the biggest assets Unreal brings to the table is its rendering technology (or networking or source – it’s close). It features modern physically-based shading, full-scene reflections, TXAA, integrated GPU particles, an efficient and easy to use terrain system (including foliage and spline tools), as well as a host of other desired features. Most features are easy to use, efficient and importantly – well integrated.

A good example is the environment reflection captures, which 'just' involve placing primitives (spheres or boxes) in the scene. These are captured offline. At runtime they are selected, (approximately) re-projected and blended together. Their cost is driven by screen coverage, in the same way as the rest of the deferred rendering pipeline. The captures are also blended with dynamic screen space reflections and use the Lightmass data to reduce leaking. All in all, a pleasure to work with.
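The sketch below is a rough illustration of the blending idea only. It reduces each sphere capture to a single colour. It then blends the captures covering a point, with a linear falloff towards each influence radius, and lets a sky colour fill any remaining weight. Epic's actual compositing (ordering, box shapes, re-projection, screen space reflections and Lightmass-based leak reduction) is not modelled. `SphereCapture` and `BlendCaptures` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 { float x, y, z; };

float Distance(Vec3 a, Vec3 b) {
    const float dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return std::sqrt(dx * dx + dy * dy + dz * dz);
}

// A sphere capture reduced to one average colour for illustration; a real
// capture stores a filtered cubemap.
struct SphereCapture {
    Vec3 center;
    float influenceRadius;
    Vec3 color;
};

// Weight each capture by a linear falloff to zero at its influence radius.
// Overlapping captures are normalised; any uncovered share goes to the sky.
Vec3 BlendCaptures(const std::vector<SphereCapture>& captures, Vec3 p, Vec3 sky) {
    Vec3 sum{0.0f, 0.0f, 0.0f};
    float totalWeight = 0.0f;
    for (const SphereCapture& c : captures) {
        assert(c.influenceRadius > 0.0f);
        const float w = std::max(0.0f, 1.0f - Distance(p, c.center) / c.influenceRadius);
        sum = {sum.x + w * c.color.x, sum.y + w * c.color.y, sum.z + w * c.color.z};
        totalWeight += w;
    }
    if (totalWeight > 1.0f) {  // overlapping captures: normalise their sum
        sum = {sum.x / totalWeight, sum.y / totalWeight, sum.z / totalWeight};
        totalWeight = 1.0f;
    }
    const float skyWeight = 1.0f - totalWeight;
    return {sum.x + skyWeight * sky.x, sum.y + skyWeight * sky.y, sum.z + skyWeight * sky.z};
}
```

Letting the sky fill the remaining weight avoids a hard pop at the edge of a capture's radius, which a plain normalised average would produce.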

Another example is the particle system. The GPU particles are embedded within the standard particle system and support most of its standard features. They also offer screen space collisions, vector fields and large numbers of particles. Epic has also made a good effort at complexity-decoupled particle lighting.

Materials

Underlying most of the graphical content is the material system. It is a visual scripting/node graph based interface that covers most of your needs from static and skeletal mesh materials to particles and post processing effects. In order to keep things clean, you can create material functions to group node logic and then expose it in a simpler form. You can also write custom material expression code snippets if you need to use advanced shader features or just want to simplify your node graph.

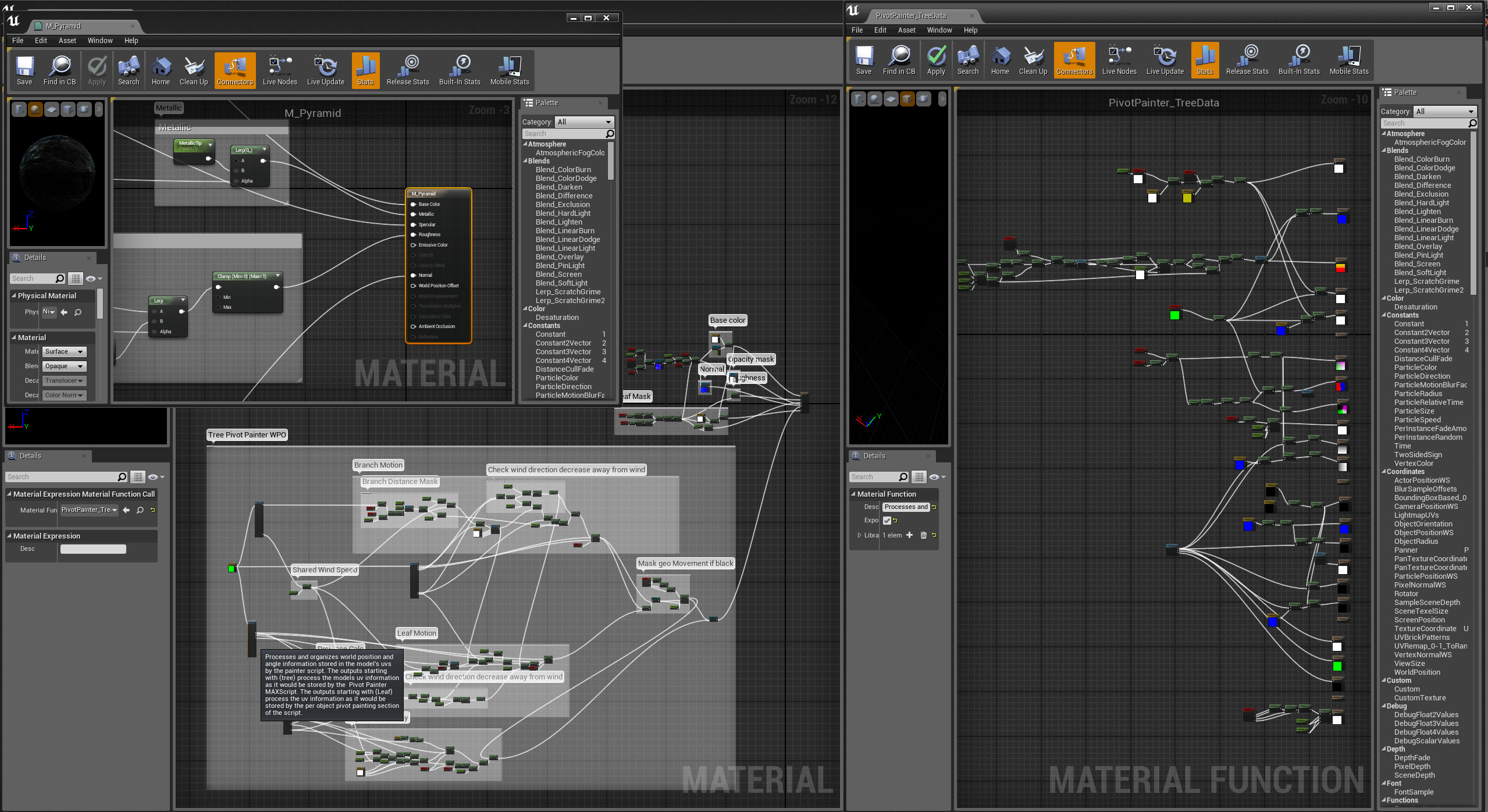

The material and material function editors.

The completed material graphs are first compiled down to HLSL or GLSL and then included by template shaders. These templates pick and choose which parts of the material code each type of shader needs. The result is then passed on to be compiled further for the target device, producing fairly efficient shaders. The material editor also has an option to view the generated HLSL code.
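The first step, turning a node graph into shader source, can be illustrated with a toy translator. It walks a list of nodes and emits one HLSL local per node. This is only a sketch under our own assumptions. The node kinds, the `Local` naming and the `Time` input are hypothetical, and the template-shader stage that decides where this code ends up is not modelled.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Toy material graph: nodes are stored so that operands always come before
// the nodes that use them (a topological order).
struct Node {
    enum Kind { Constant, Input, Multiply, Add } kind;
    float value = 0.0f;        // used by Constant
    std::string name;          // used by Input, e.g. a shader parameter
    std::size_t a = 0, b = 0;  // operand node indices for Multiply/Add
};

// Emits one HLSL local per node; the last node is returned as the result.
std::string EmitHlsl(const std::vector<Node>& nodes) {
    assert(!nodes.empty());
    std::string code;
    for (std::size_t i = 0; i < nodes.size(); ++i) {
        const Node& n = nodes[i];
        std::string rhs;
        switch (n.kind) {
            case Node::Constant: rhs = std::to_string(n.value); break;
            case Node::Input: rhs = n.name; break;
            case Node::Multiply:
            case Node::Add:
                assert(n.a < i && n.b < i && "operands must precede their user");
                rhs = "Local" + std::to_string(n.a) +
                      (n.kind == Node::Multiply ? " * " : " + ") +
                      "Local" + std::to_string(n.b);
                break;
        }
        code += "float Local" + std::to_string(i) + " = " + rhs + ";\n";
    }
    code += "return Local" + std::to_string(nodes.size() - 1) + ";\n";
    return code;
}
```

For a graph of `Time`, a constant `0.5` and a multiply of the two, this emits three `float LocalN = ...;` lines followed by `return Local2;`. The result is straight-line code that the downstream shader compiler can optimise freely.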

If you need to do something more advanced, such as hand optimize, use compute shaders or supply constant arrays, it gets a little less ideal as you’ll need to jump in and start modifying the engine code – however that option is there (first world problems, eh?).

Renderer WIP

Other parts of the renderer can be lacking, missing or generally incomplete. For example, each translucent object uses a single reflection capture, chosen by a simple distance-to-center heuristic. That heuristic ignores the capture's influence radius, so it can easily select a non-ideal cubemap.
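The sketch below makes the problem concrete. It compares a nearest-center pick with one that respects influence radii, using captures reduced to a center and a radius. The second function is our own hypothetical alternative, not how the engine behaves or how Epic plans to fix it.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

struct Vec3 { float x, y, z; };

float Distance(Vec3 a, Vec3 b) {
    const float dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return std::sqrt(dx * dx + dy * dy + dz * dz);
}

struct Capture { Vec3 center; float influenceRadius; };

// The heuristic described above: the nearest capture center wins, whether or
// not the object is actually inside that capture's influence radius.
int PickByDistance(const std::vector<Capture>& caps, Vec3 p) {
    int best = -1;
    float bestDist = std::numeric_limits<float>::max();
    for (std::size_t i = 0; i < caps.size(); ++i) {
        const float d = Distance(p, caps[i].center);
        if (d < bestDist) { bestDist = d; best = static_cast<int>(i); }
    }
    return best;
}

// Hypothetical alternative: among captures whose influence sphere contains
// the object, take the smallest (most local) one; fall back to the nearest
// center only when no capture covers the object at all.
int PickByInfluence(const std::vector<Capture>& caps, Vec3 p) {
    int best = -1;
    float bestRadius = std::numeric_limits<float>::max();
    for (std::size_t i = 0; i < caps.size(); ++i) {
        assert(caps[i].influenceRadius > 0.0f);
        const bool covers = Distance(p, caps[i].center) <= caps[i].influenceRadius;
        if (covers && caps[i].influenceRadius < bestRadius) {
            bestRadius = caps[i].influenceRadius;
            best = static_cast<int>(i);
        }
    }
    return best >= 0 ? best : PickByDistance(caps, p);
}
```

Consider a large room capture at the origin (radius 100) and a small alcove capture 12 units away (radius 3). An object 8 units from the origin is closer to the alcove's center, so the distance heuristic picks the alcove cubemap even though the object lies outside its influence.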

In another case, we set out to test foliage instancing with a few boulder meshes. We followed the lightmap requirements but couldn't get the lighting to match, only to find that the UInstancedStaticMeshComponent::GetStaticLightingInfo function was empty.

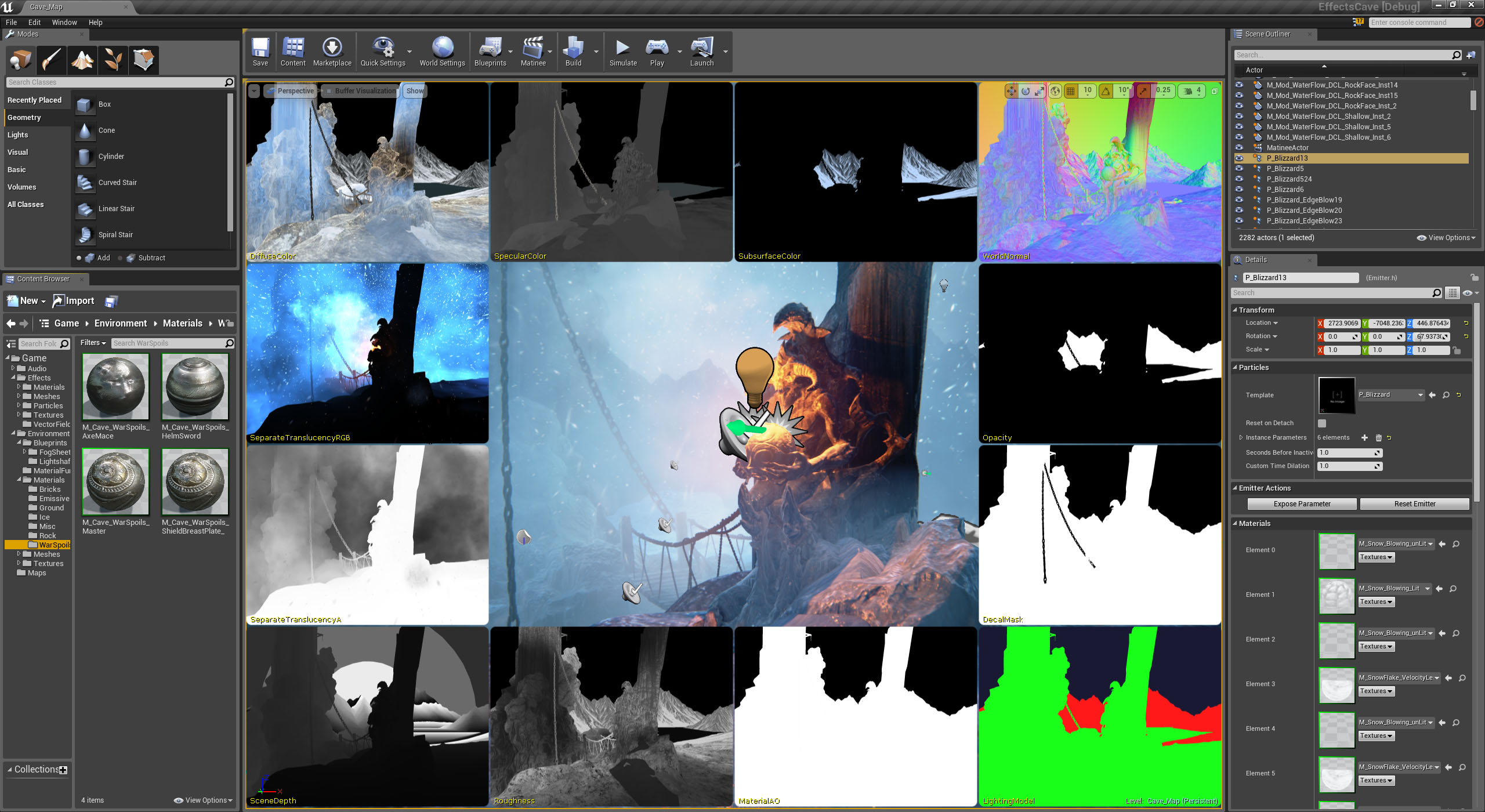

Unreal Engine 4 visualizing a bunch of the rendering buffers.

Networking

Another huge plus for Unreal over Unity, for us, is its networking and replication solution. Its overall architecture has been proven over many iterations of the engine. With Epic Games' Fortnite and many other upcoming games using the current iteration, it should be just as solid.

Right out of the box, it supports dedicated authoritative servers, which most commercial online games require. Even better, these servers can be compiled to run under Linux. This matters if you need to host many game instances on a back-end server farm, because Windows boxes are almost always considerably more expensive than Linux boxes on the same hardware. Microsoft's Azure instances are about 50% more expensive on Windows than on Linux, and Amazon EC2 Windows instances are almost double the price of Linux instances.

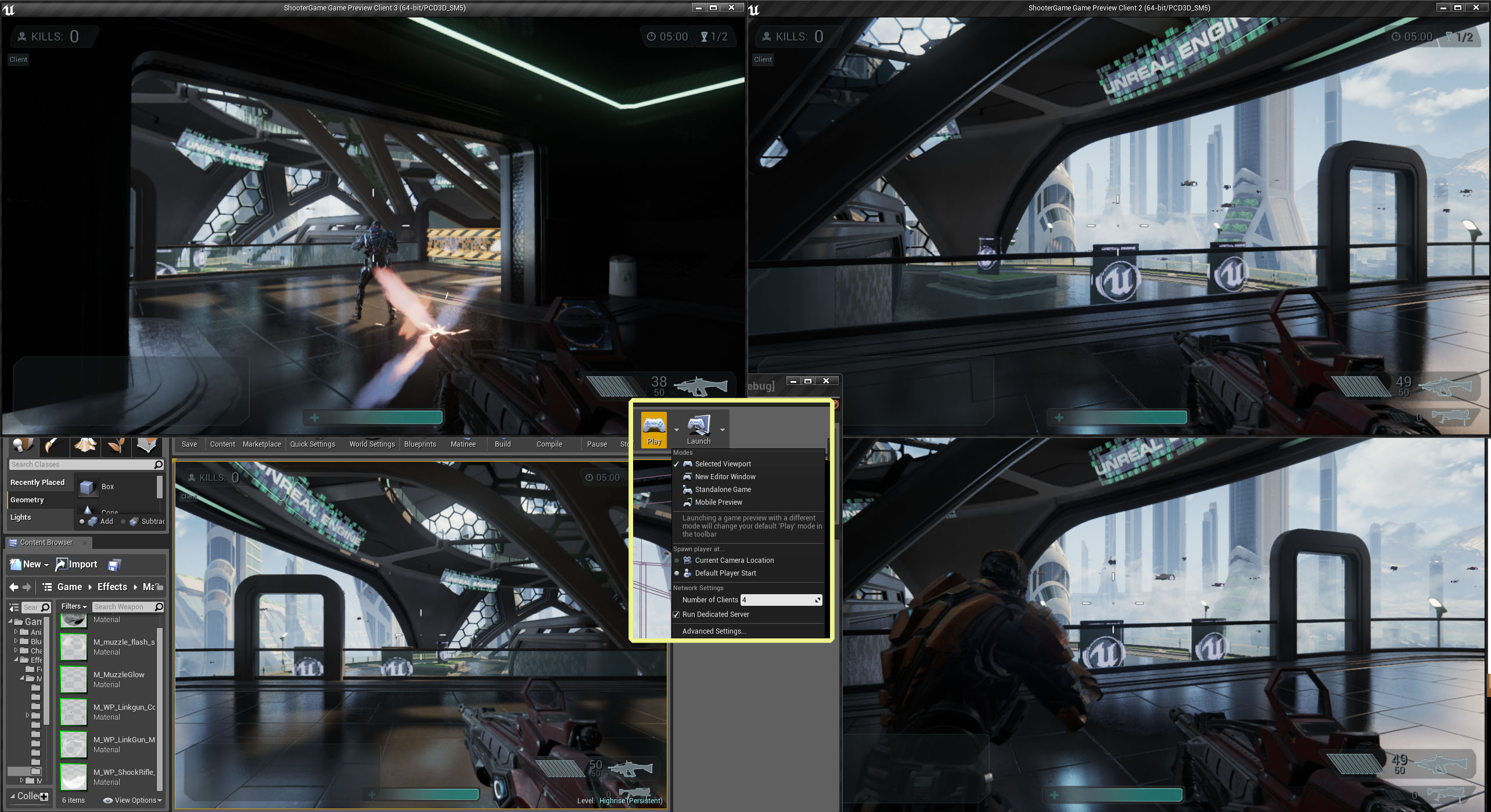
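On a dedicated authoritative server, clients only send their inputs and the server alone runs the simulation, so a tampered client cannot simply report a new position. The engine-agnostic sketch below shows that split with a toy car model. The class, the input ranges and the physics constants are all hypothetical and have nothing to do with Unreal's replication API.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <unordered_map>

struct PlayerInput { float throttle; float steer; };  // all a client may send
struct CarState { float x = 0.0f, y = 0.0f, speed = 0.0f; };

class AuthoritativeServer {
public:
    void AddPlayer(std::uint32_t id) { cars_[id] = CarState{}; }

    // Clients submit intent only; the server clamps it, so a modified client
    // cannot request, say, ten times the allowed throttle.
    void ReceiveInput(std::uint32_t id, PlayerInput in) {
        if (cars_.find(id) == cars_.end()) return;  // ignore unknown players
        in.throttle = std::clamp(in.throttle, -1.0f, 1.0f);
        in.steer = std::clamp(in.steer, -1.0f, 1.0f);
        inputs_[id] = in;
    }

    // Only the server advances the simulation; clients display the results.
    void Tick(float dt) {
        assert(dt > 0.0f);
        for (auto& [id, car] : cars_) {
            const auto it = inputs_.find(id);
            const PlayerInput in = (it != inputs_.end()) ? it->second : PlayerInput{0.0f, 0.0f};
            car.speed = std::clamp(car.speed + in.throttle * kAccel * dt, -kMaxSpeed, kMaxSpeed);
            car.x += car.speed * dt;
            car.y += in.steer * car.speed * dt;  // toy steering model
        }
    }

    CarState StateOf(std::uint32_t id) const { return cars_.at(id); }

private:
    static constexpr float kAccel = 10.0f;
    static constexpr float kMaxSpeed = 30.0f;
    std::unordered_map<std::uint32_t, CarState> cars_;
    std::unordered_map<std::uint32_t, PlayerInput> inputs_;
};
```

In a real game, the server would then send each `CarState` back to every client and keep the clients in sync. That synchronisation is the part Unreal's replication system is designed to handle for you.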

Another nicety is that they have made it fairly easy to run dedicated servers and test multiple users on the one machine, all from within the editor.

Who needs friends? Four way multi-player on the one desktop.

Networking under Unity

Under Unity, from what we could tell, most people ignore the built-in solution (for a bunch of reasons) and instead go for a third-party option such as Photon or uLink. The community has even put together a whole spreadsheet of alternatives for comparison. If you want a C++ server, you will be looking at writing your own Unity plugin and the back-end server yourself.

The solution we were leaning towards was not really ideal. It did support dedicated authoritative servers where we could run our fundamental physics and gameplay simulation on the server, but as it was written in C# this meant we needed to use Mono under Linux and we had been warned that we would see noticeably reduced performance compared to Windows. At the time, gauging by the forums this approach was also mostly untested on Linux. Because of these reasons, as well as the upfront/ongoing costs involved and a bit of misalignment with what we really needed, we budgeted for writing our own solution.

At that stage we also re-evaluated UDK/UE3 because of its networking framework, but decided against it due to cost and engine limitations. Now that UE4 is out, it solves a lot of those issues for us.

Networking resources

If you want to learn more about the basics of networking and replication in Unreal, there is a great, long-standing UE3 documentation page. There is also a smaller UE4 page and a Networking Tutorials playlist that uses the Blueprints system. (Watching those videos, I was a little concerned that the server side of the blueprint might ship with the client, which is far from ideal. I am not sure that is actually the case, however.)

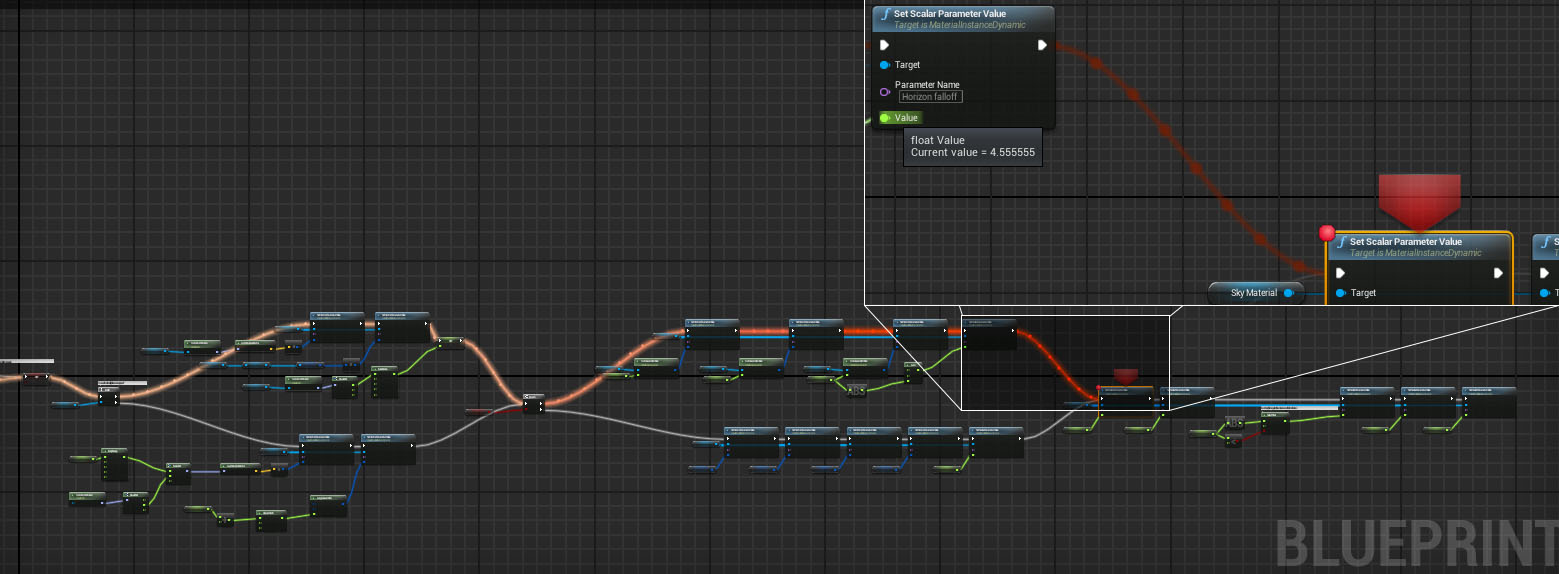

Blueprints

Blueprints would have to be one of the best-integrated visual scripting languages I have used. It is fairly clean and easy to use, but above all it stands out because of its runtime visual debugger. Debugging a visual language without one can be painful. It is also great to see Epic continuing to add features that make the workflow even better. For anyone struggling to learn C# under Unity, Blueprints could offer the answer.

I have heard that Blueprints cost roughly the same as UnrealScript, at about 10x slower than the equivalent C++. Used correctly, that overhead might be mostly mitigated. Still, it makes me wonder why they aren't compiled down to C++ and then run through an optimizing compiler, in a similar way to the material graphs.
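The toy below shows where that kind of overhead comes from by evaluating the same expression twice. The first pass goes through a tiny stack-based bytecode interpreter. The second is the plain C++ function that a graph-to-C++ step might emit. It illustrates interpretation cost in general. It is not a model of Epic's script VM, and it does not measure or reproduce the ~10x figure. All names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Toy bytecode: every instruction is dispatched at run time, which is where
// an interpreter pays its overhead compared with compiled code.
enum class Op { PushConst, PushVar, Add, Mul };
struct Instr { Op op; double value; std::size_t var; };

double Interpret(const std::vector<Instr>& code, const std::vector<double>& vars) {
    std::vector<double> stack;
    for (const Instr& ins : code) {
        switch (ins.op) {
            case Op::PushConst: stack.push_back(ins.value); break;
            case Op::PushVar: stack.push_back(vars.at(ins.var)); break;  // bounds-checked
            case Op::Add:
            case Op::Mul: {
                assert(stack.size() >= 2);
                const double rhs = stack.back(); stack.pop_back();
                const double lhs = stack.back(); stack.pop_back();
                stack.push_back(ins.op == Op::Add ? lhs + rhs : lhs * rhs);
                break;
            }
        }
    }
    assert(stack.size() == 1);
    return stack.back();
}

// What a "compile the graph to C++" step might emit for the same graph,
// speed * boost + 2.0: no dispatch, no stack, full optimizer access.
double NativeSpeedBoost(double speed, double boost) { return speed * boost + 2.0; }
```

The interpreted version pays a dispatch per node plus stack traffic and bounds checks. In the compiled version, the optimizer can remove all of that and inline the result into its caller.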

If only the links were depicted as strands of spaghetti, we could redefine spaghetti code.

Sample projects

Also worth a mention is the number of high-quality, varied and meticulously maintained example projects coming out on the Marketplace. While learning the engine, I would often load up and refer to the Shooter Game, Strategy Game or Content Examples. I also spent a good amount of time looking over the Elemental Demo and Effects Cave, as well as most of the other projects. Although we aren't using the vehicle physics released in UE4.2, the Vehicle Game is another top example.

They are all definitely worth a look and you can find supporting documentation on their site.

The rest?

Most of the other core built-in systems such as NavMesh, audio, streaming and the animation tools for Unreal look solid (if not a little FPS/TPS orientated). It also includes CSG tools which are handy for prototyping/blocking out levels.

The Windows XInput implementation is currently missing vibration support, otherwise it works well. They have included a nice plugin framework for adding additional input modules.

The experimental behaviour trees look good. The cinematics tool, Matinee, is cool but is looking to be superseded eventually by a newer system called Sequencer. They are also in the process of improving their UI systems by adding a WYSIWYG system called UMG (Unreal Motion Graphics). It will be built on top of Slate which is used most often for game UI but is also what the Editor itself uses.

I had better leave it here for this post but will cover the rest of the rest next time, including some of the key considerations.

© 2024 Space Dust Studios
